The question “Should I use AI recruiting tools or hire manually?” is the wrong question. AI handles searching. Humans own judgment. The companies getting this right use both, for completely different jobs, and their hires stick: the discovery-led process described below keeps 90% of placements still thriving at 18 months. The companies getting it wrong automate their broken process and make bad decisions faster.

What AI Genuinely Does Well

AI excels at three categories of hiring work: tireless searching, instant filtering, and pattern recognition at scale.

A human recruiter reviews 20 to 30 profiles in an hour before fatigue sets in. AI analyzes 500-plus profiles while you sleep, across platforms you’d never manually check: LinkedIn, GitHub, niche industry forums, conference speaker lists, and professional communities.

It never gets tired. It never says “I can’t look at another resume today.”

Wrong location? Wrong experience level? Missing a non-negotiable requirement? AI eliminates these mismatches in seconds. No human needs to spend time evaluating a junior developer when you’re hiring a senior architect. This isn’t judgment. This is math.
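To make “this is math” concrete, here is a minimal Python sketch of hard-filter screening. The `Candidate` fields, the sample requirements, and the `passes_hard_filters` helper are hypothetical illustrations, not any vendor’s API. Each check is a yes/no rule with no judgment involved.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    # Hypothetical candidate record; real tools store far richer data.
    name: str
    location: str
    years_experience: int
    skills: set[str] = field(default_factory=set)


def passes_hard_filters(
    c: Candidate,
    allowed_locations: set[str],
    min_years: int,
    required_skills: set[str],
) -> bool:
    """Return True only if the candidate clears every non-negotiable rule."""
    if c.location not in allowed_locations:
        return False  # wrong location
    if c.years_experience < min_years:
        return False  # wrong experience level
    if not required_skills <= c.skills:
        return False  # missing a non-negotiable requirement
    return True


# Hypothetical example data for a senior role.
candidates = [
    Candidate("A", "Berlin", 9, {"python", "system design"}),
    Candidate("B", "Berlin", 2, {"python"}),
    Candidate("C", "Austin", 10, {"python", "system design"}),
]

shortlist = [
    c.name
    for c in candidates
    if passes_hard_filters(c, {"Berlin", "Remote"}, 7, {"python", "system design"})
]
print(shortlist)  # ['A']
```

Everything a human should weigh, like why someone moved on or what they taught themselves, is deliberately absent from these rules. That is where the handoff to people happens.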

When you’ve tracked hundreds of hires over 18 months, AI can identify which combinations of experience, background, and behavioral signals correlate with success in your specific environment. Humans see individual stories. AI sees systems.

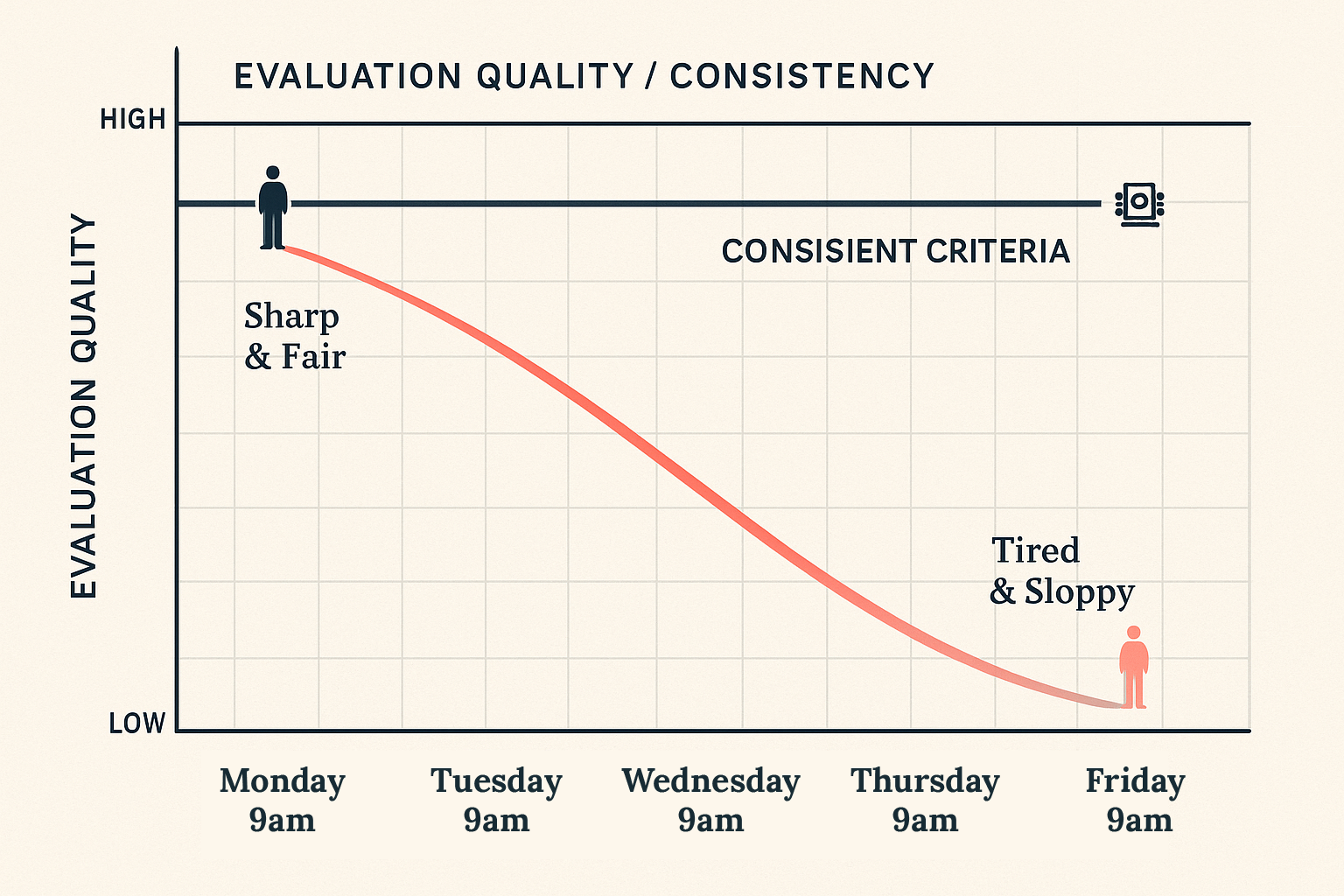

AI also applies the same criteria every single time. It doesn’t get sloppy at 4pm on Friday. It doesn’t give extra points to people who attended the same university as the hiring manager. (It will, however, faithfully repeat any bias built into the criteria it’s given.) For high-volume, low-judgment work, AI beats human effort on speed, scale, and consistency.

What AI Cannot Do

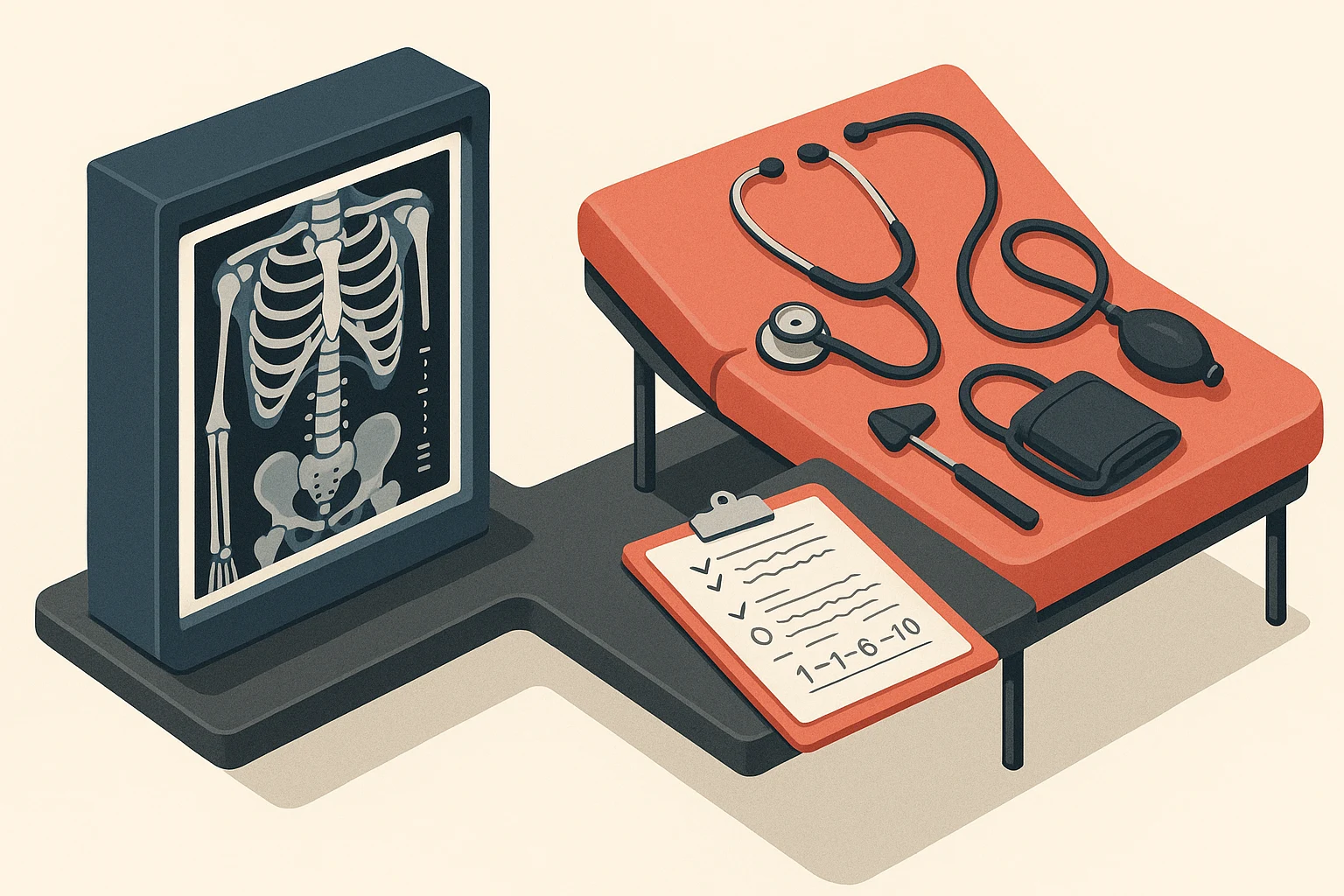

AI cannot understand context beyond the data. A resume says “software engineer at Company X.” AI sees a keyword match. A human asks: Was that a 10-person startup or a 10,000-person enterprise? What was the team structure? Why did they leave?

Context isn’t on the resume. Context is in the conversation.

AI cannot build relationships with passive talent. The best candidates aren’t scrolling job boards. They’re busy being excellent at their current jobs. Convincing them to care about what you’re building requires real conversations about what motivates them beyond compensation.

AI cannot apply judgment to edge cases. The candidate who left three jobs in two years? AI sees a red flag: job hopper, reject. A human discovers each company ran out of funding. The candidate was loyal until the money stopped.

The candidate with the “wrong” educational background who taught themselves everything? AI rejects on credentials. Humans see a self-driven learner with proven capability.

AI cannot evaluate cultural chemistry. Will this person make your team better or worse? Will their communication style mesh with your existing dynamics? These questions require watching someone solve problems in real time, handle feedback, and interact with your team.

No algorithm produces this.

The Automation Trap

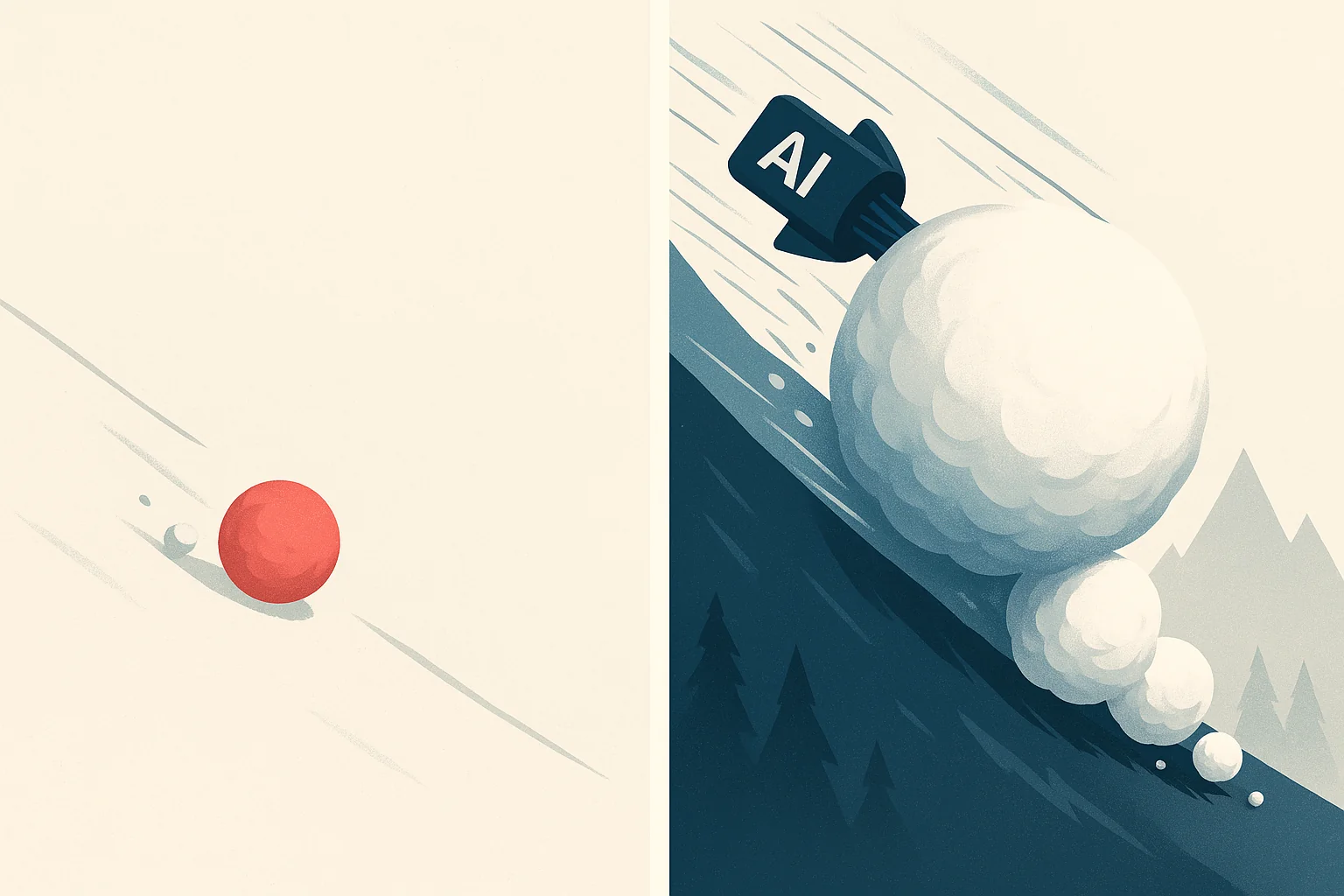

If your manual process focuses on the wrong things, automating it with AI makes things worse faster.

This is the trap most companies fall into, and it’s the reason AI recruiting tools have a reputation problem.

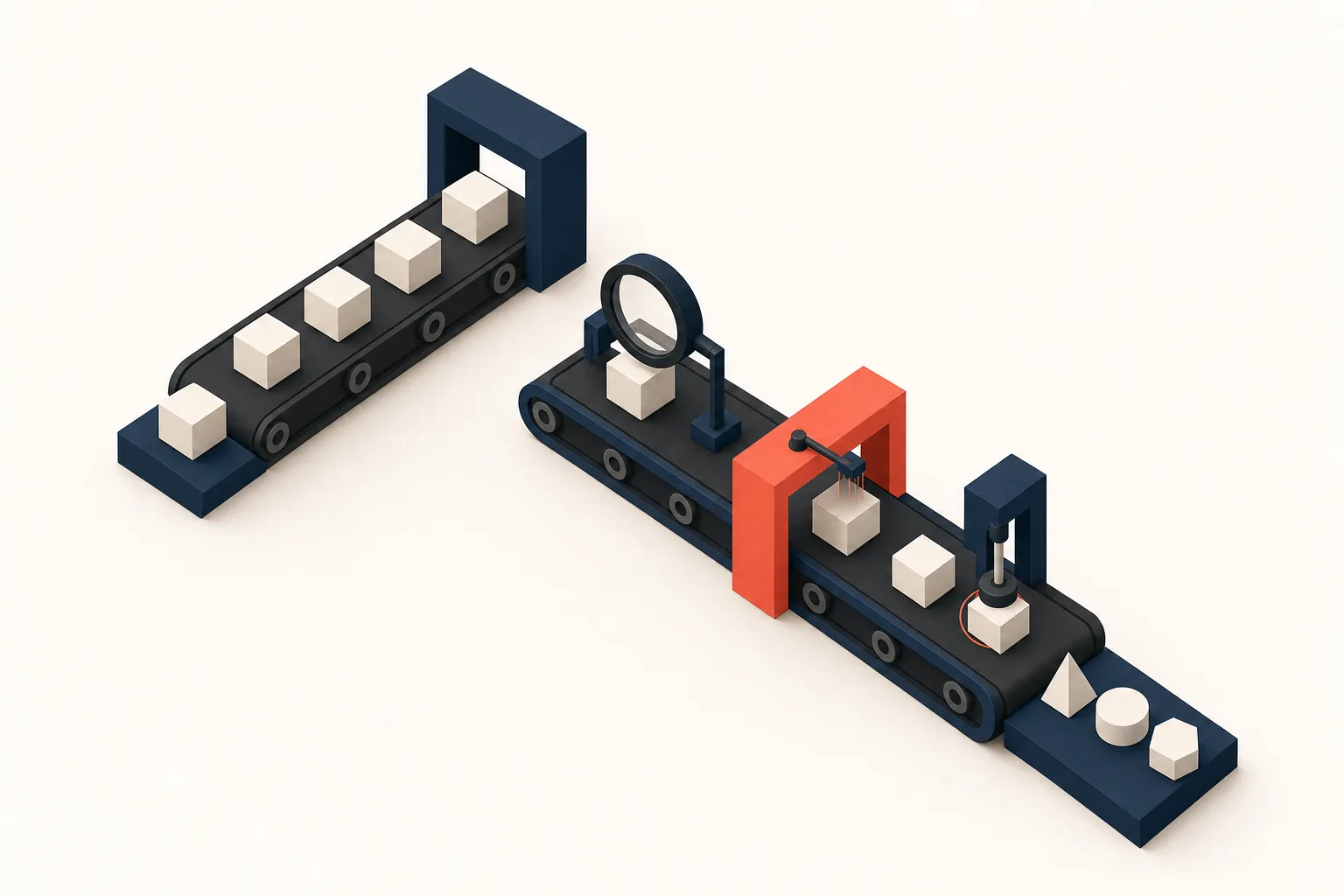

Most AI recruiting tools are built on keyword matching. They scan resumes for terms that appear in your job description, rank candidates by match percentage, and present a sorted list.

This sounds efficient until you realize it automates the exact failure mode that produces bad hires: surface-level evaluation.

Keyword matching doesn’t reveal whether someone will thrive in your specific environment. It doesn’t reveal what they want from work, what motivates them, or how they collaborate under pressure. It tells you their resume contains the right words. That is not the same thing.
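A naive keyword matcher of the kind described above can be sketched in a few lines of Python. This is a simplified illustration under assumed behavior (case-insensitive word matching, with the score defined as the share of job-description keywords found in the resume); commercial tools differ in detail, and the resumes below are hypothetical. Notice what the score measures: vocabulary overlap, nothing else.

```python
import re


def tokenize(text: str) -> set[str]:
    # Lowercase and split into words; a crude stand-in for real resume parsing.
    return set(re.findall(r"[a-z0-9+#]+", text.lower()))


def match_percentage(resume: str, keywords: set[str]) -> float:
    """Share of job-description keywords that appear in the resume, 0 to 100."""
    if not keywords:
        return 0.0
    found = keywords & tokenize(resume)
    return 100.0 * len(found) / len(keywords)


# Hypothetical job-description keywords and resumes.
job_keywords = {"kubernetes", "terraform", "aws", "python"}

resumes = {
    "keyword_stuffer": "Skills: Kubernetes, Terraform, AWS, Python.",
    "quiet_builder": "Ran the platform team that moved 40 services to k8s on AWS.",
}

# Rank by match percentage, highest first, exactly as the tool would present them.
ranked = sorted(
    resumes, key=lambda name: match_percentage(resumes[name], job_keywords), reverse=True
)
for name in ranked:
    print(f"{name}: {match_percentage(resumes[name], job_keywords):.0f}%")
```

Here the keyword-stuffed resume scores 100% and the candidate who actually ran a platform migration scores 25%, because “k8s” isn’t the word the job description used. The ranking rewards phrasing, not capability.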

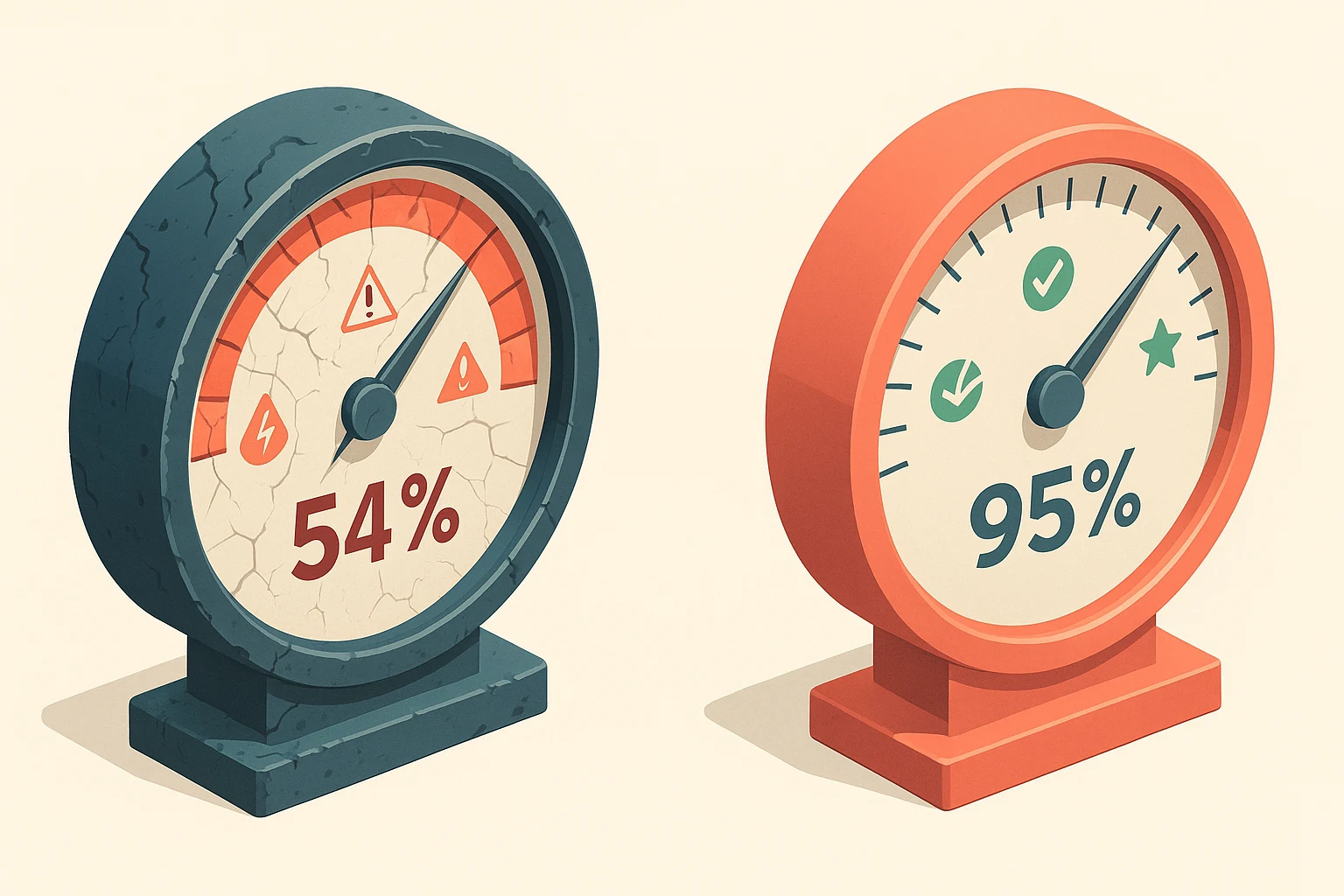

Speed is not the same as fit. Industry-average retention at 18 months hovers around 58%, which means more than four in ten of those fast hires are gone within a year and a half.

When you resist AI recruiting tools, you’re protecting something real: context, relationships, cultural chemistry, and judgment on edge cases. Those instincts are correct, and you shouldn’t abandon them. The problem is that you’re spending your time on manual work AI could handle, which leaves less time for the judgment calls only humans can make.

The Partnership Framework

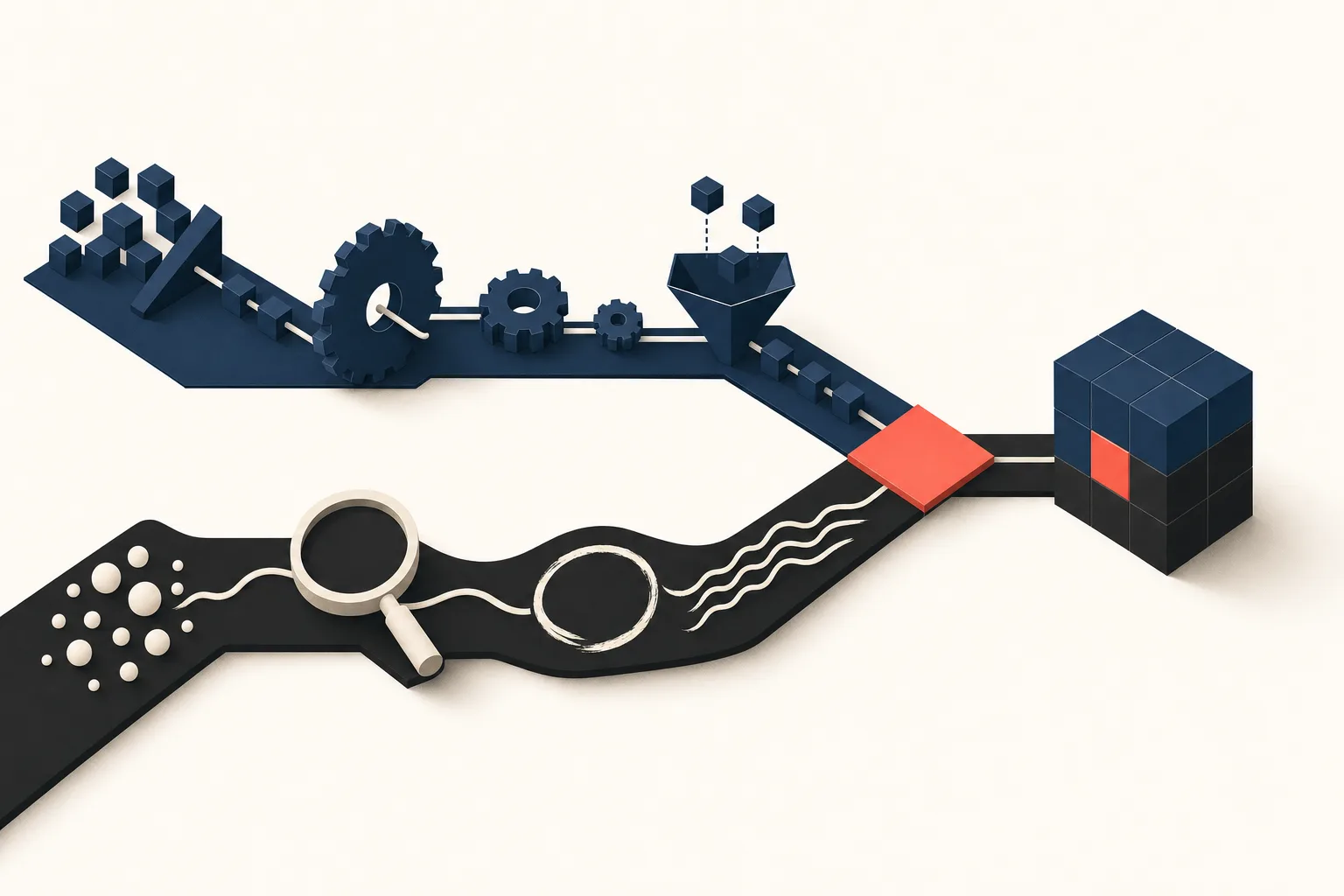

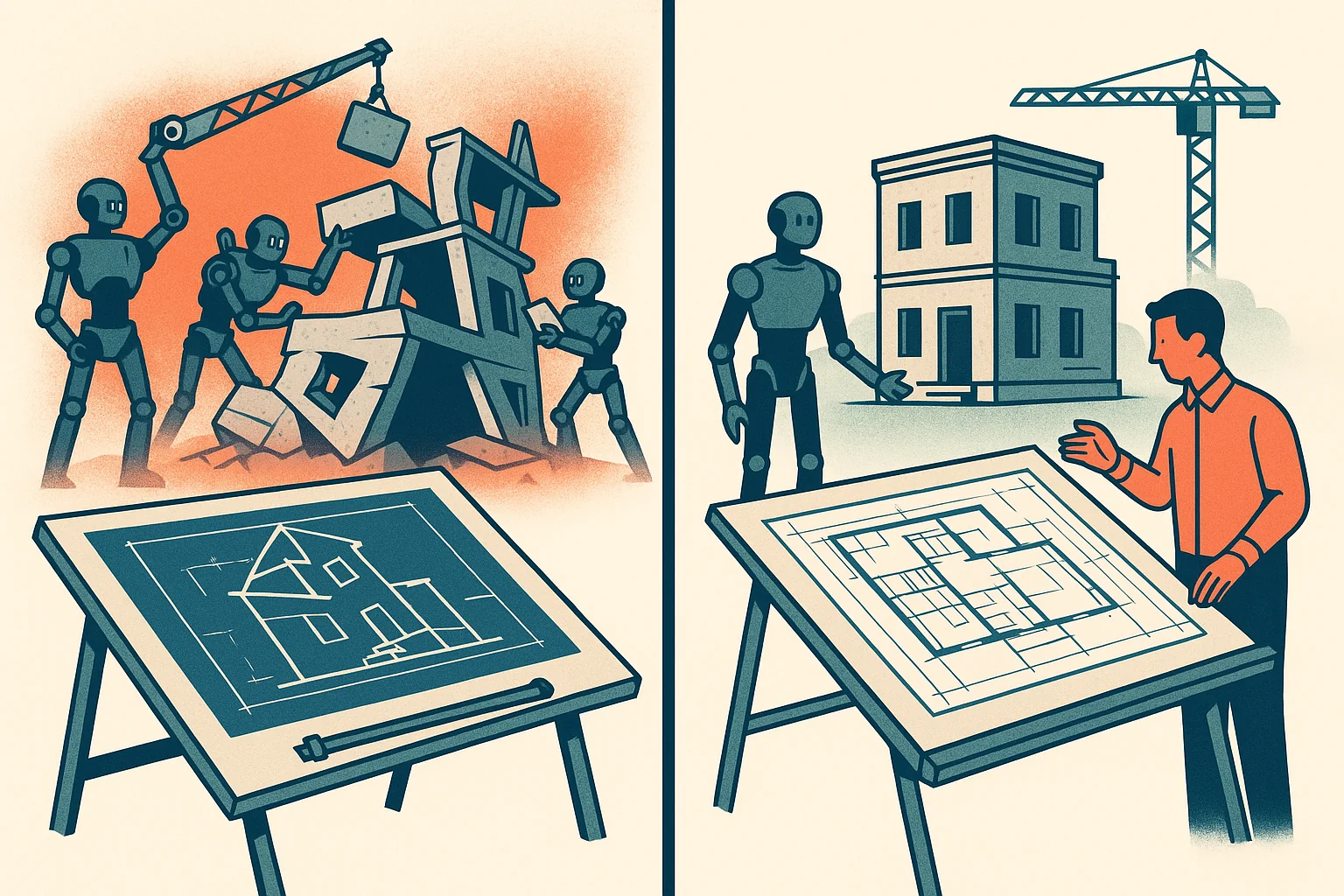

The framework that works is precise: AI does the searching. Humans own every judgment call. The division is non-negotiable.

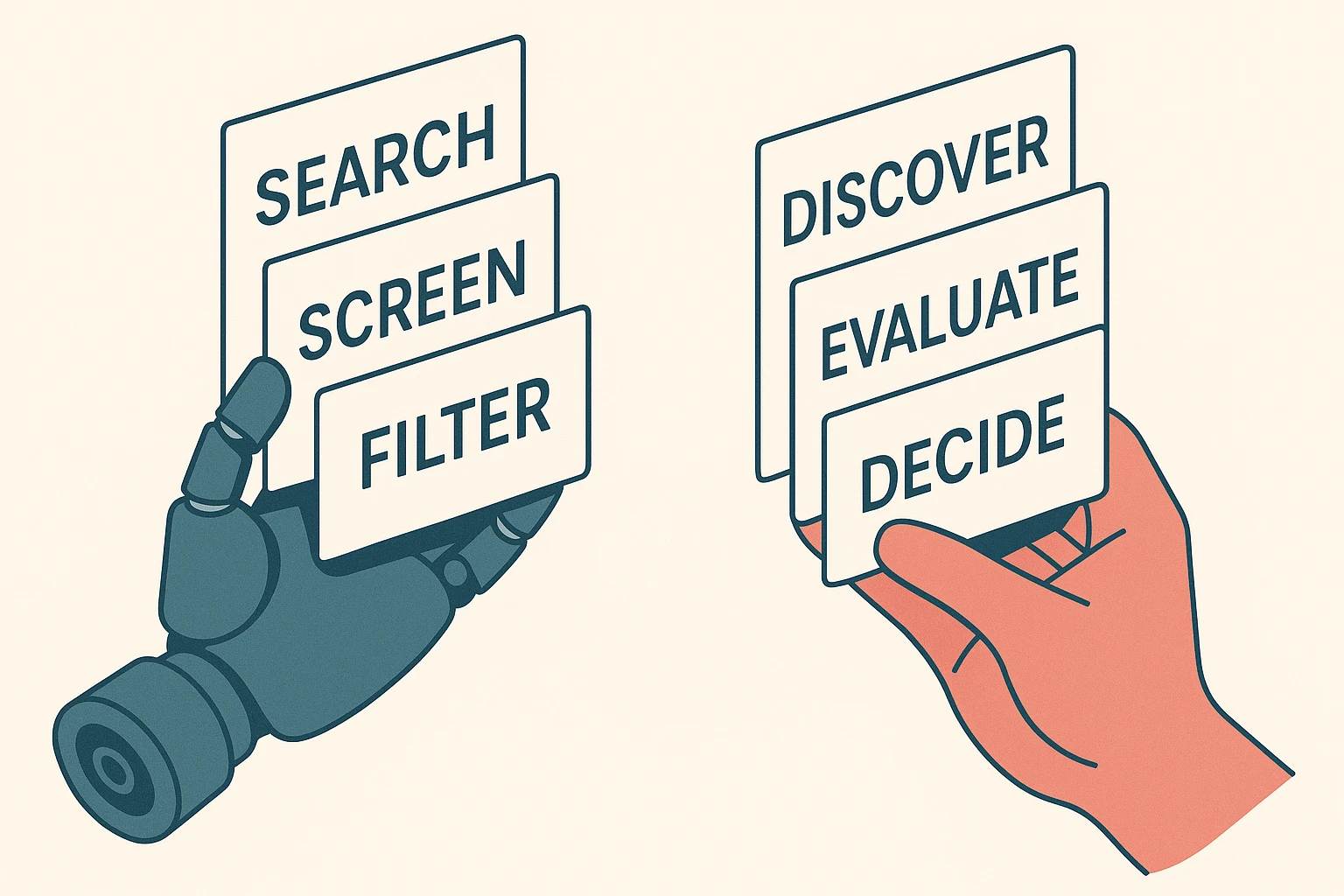

AI’s job: Search everywhere, 24 hours a day, across platforms you’d never manually check. Analyze 500-plus profiles per role. Filter obvious mismatches. Present candidates who match the profile that humans defined.

Humans’ job: Conduct discovery to define what “match” actually means. Map the 32 Work Drivers that predict success in this specific role. Evaluate presented candidates in full context. Have real conversations. Build relationships. Make every decision that matters.

The critical question for evaluating any AI recruiting tool:

Does this tool help humans make better judgments, or does it make judgments for humans?

Good AI extends human capability. It presents candidates with context so humans can evaluate them better. It expands the candidate pool. It eliminates busywork so humans can focus on high-judgment activities.

Bad AI replaces human judgment. It auto-ranks candidates. It narrows your pool to “best fit” based on keyword matching. It replaces discovery and relationship-building with automation.

Watch for red flags in vendor pitches. “Our AI will find your perfect candidate” means keyword matching dressed up as intelligence. “Eliminate bias with our algorithm” means their biases are hidden instead of visible. “Set it and forget it” means they don’t understand that hiring requires ongoing human judgment.

The green flags: “AI finds, humans decide.” “Expands your reach to passive candidates.” “Requires human oversight at key decision points.”

What This Looks Like in Practice

The AI-human partnership works inside a discovery-led hiring process, week by week.

Week 1: Discovery (100% Human)

Before AI touches anything, humans do the foundational work. Thirty to forty questions map what you actually need.

Not “we need a marketing manager.” What does success look like at 30, 60, and 90 days? Who will this person work with daily? What behaviors make someone successful in this specific seat?

This produces the Right Person Profile, including 32 Work Drivers across functional, social, and emotional dimensions. AI can’t do this work. It requires conversations, follow-up questions, and the ability to hear what someone isn’t saying explicitly.

Weeks 2 through 4: AI-Powered Search

Now AI kicks in, guided by the discovery framework humans created. It searches across LinkedIn, GitHub, industry forums, conference speaker lists, and niche communities. It analyzes 500-plus profiles to find the handful that match.

Humans review every AI-presented candidate with full context. Not just “do they have the skills” but “will they thrive here, with this team, doing this specific work?”

Weeks 5 through 7: Human-Led Evaluation

AI has done its job. Now humans do theirs. Personalized outreach, not templates. Real conversations about what candidates want from work.

Discovery calls assess alignment on Work Drivers, not just skills. Humans evaluate cultural fit through conversations, watching how candidates think, communicate, and approach problems.

Weeks 8 through 10: Proving Before Committing

Finalists complete paid Work Simulations. Not generic assessments. Bespoke challenges built from actual problems the company faces.

Candidates get paid for this work because real work deserves real compensation. Work sample tests are among the strongest single predictors of job performance, with a validity of about .54 versus .38 for unstructured interviews (Schmidt & Hunter, 1998, Psychological Bulletin). This is 100% human judgment: watching how someone thinks, how they handle ambiguity, and whether their approach meshes with the team.

The result: 90% of placements still thriving at 18 months, backed by a 120-day guarantee.

Not because AI is magic. Because AI took over the busywork, and discovery-led hiring put the freed-up time into the judgment calls that actually determine whether a hire succeeds or fails.

What You Can Do This Week

Three changes you can make immediately, without hiring anyone.

First, audit where your time goes. List every hiring activity you do manually: sourcing, screening, scheduling, discovery conversations, cultural evaluation, final decisions. Which are low-judgment tasks that AI could handle? Which are high-judgment tasks that must stay human?

If you’re spending 10 hours a week screening resumes and 2 hours on discovery conversations, you have it backwards.

Second, fix your evaluation framework before automating anything. Can you articulate what success looks like at 30, 60, and 90 days for your open role? Can you name the specific behaviors that make someone successful in your environment?

If you can’t answer these questions clearly, AI won’t help. It will automate your confusion.

Third, evaluate every AI tool with one question. “Does this help humans make better judgments, or does it make judgments for humans?” This separates tools that extend your capability from tools that replace your thinking.

The path forward isn’t AI replacing humans. It isn’t humans working entirely manually. It’s partnership. AI doing what AI does best. Humans doing what humans do best. And companies building teams where everyone makes everyone better.